Every Robot That Can 'Figure Things Out' Was Taught How to Figure Things Out

Physical Intelligence says its new model handles tasks it was never taught. That sentence is doing a lot of work.

Photo · TechCrunch

There's a particular flavor of press release language that the AI industry has perfected over the last few years, and Physical Intelligence's announcement of π0.7 is a clean specimen. The new model, the company says, can figure out tasks it was never taught. TechCrunch ran with it, framing the capability as an early but meaningful step toward the long-sought goal of a general-purpose robot brain.

Meaningful step. Long-sought goal. Never taught.

Every word in that construction is technically defensible and collectively kind of slippery.

The Semantics Are the Product

Here's the thing about "never taught": it depends entirely on how you define the unit of teaching. A model trained on vast amounts of robotic interaction data, human demonstration footage, and simulation runs hasn't been taught Task X specifically — but it has been marinated in the general shape of how tasks work, how objects behave, how hands move through space. Calling that "never taught" is like saying a chef who went to culinary school for a decade has never been taught a specific dish they improvise on the fly. Technically true. Meaningfully misleading.

The phrase "general-purpose" is doing similar work. It sounds like a robot that can handle your laundry, fix your gutters, and make a reasonable cup of pour-over. What it more likely means, in the honest engineering sense, is a model that generalizes better than its predecessors across a wider distribution of tasks. That is genuinely impressive, and also not the same thing at all.

Physical Intelligence describes π0.7 as an early step. That's the escape hatch. It's always an early step until suddenly it isn't, and by then the original framing has calcified into received wisdom.

What the Coverage Reveals

What's interesting isn't that Physical Intelligence made these claims. Startups stake out ambitious territory; that's the game. What's interesting is that TechCrunch's coverage passes the company's own language along almost as-is, with the "early but meaningful step" framing sitting in the piece as if it had been independently assessed.

The TechCrunch writer is covering a real thing. Physical Intelligence is a real company with real researchers working on hard problems. π0.7 may well represent genuine progress in robot generalization. None of that is in dispute. What's worth noticing is how smoothly the vocabulary of AI ambition ("never taught," "general-purpose," "long-sought goal") moves from company communication into editorial framing, and how little friction there is between the press release and the piece.

This is the cycle. A capability gets announced. The language of the announcement becomes the language of the coverage. The coverage becomes the ambient understanding. By the time anyone interrogates the semantics, the next model has already shipped and the conversation has moved on.

There's a version of this story that asks: what exactly was π0.7 trained on, how much data, from what sources, and what does "figure out" actually look like in a controlled demo versus an uncontrolled environment? Those are the questions that would tell you whether this is a step or a stride.

Maybe the answers are impressive. Maybe they're not. The point is the coverage doesn't get there — and in 2025, that gap between the claim and the interrogation is where most of the interesting stuff lives.

General-purpose is a destination, not a feature. Announcing you're headed there isn't the same as arriving.

Keep reading tech.

Both Sides of the AI Jobs Debate Are Solving for the Wrong Person

One camp wants standardization, another predicts creative destruction — neither is talking about the warehouse worker who isn't getting reskilled into anything.

People Tripled Their Traffic to a Search Engine That Does Less

After Google's May I/O announcements, users didn't ask for better AI. They asked for none.

Three Companies Posted the Same Four Words. Someone Has to Pay for That Bet.

Nvidia, Microsoft, and Arm didn't just tease a chip — they jointly signed a vision statement, and the bill comes due the moment you ask what local AI is actually for.

From the other desks.

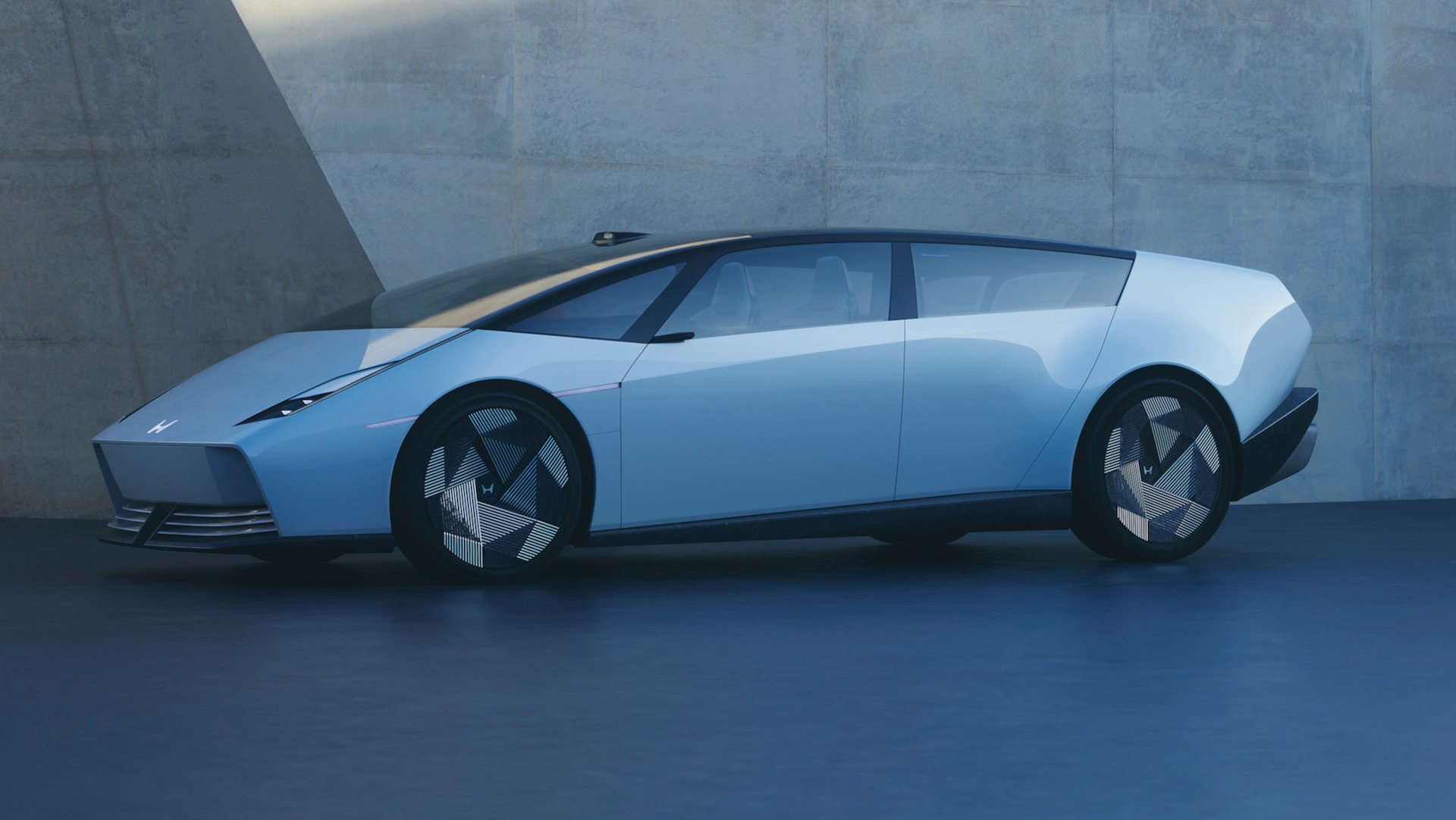

Ferrari Built Something Ugly. Nobody Left the Room.

When a car that breaks every visual rule still sells itself before the doors open, you have to wonder what the rules were ever protecting.

Skate Culture Stopped Knocking and Walked Through the Front Door

Palace, Nike, and England didn't blur the line between subculture and national institution — they confirmed it no longer exists.

A Million People Came Anyway

Arsenal won the league, lost to PSG, and still filled the streets — which tells you everything about what a title means when the bigger stage is already gone.