David Silver Raised $1.1 Billion to Prove Human Feedback Was Always the Ceiling

Ineffable Intelligence isn't just a new lab — it's a public admission that the data we've been feeding AI was the problem all along.


There's a particular kind of confidence required to name your company Ineffable Intelligence and then raise $1.1 billion on the thesis that everyone else in your industry has been doing it wrong.

David Silver, the former DeepMind researcher behind AlphaGo, apparently has that confidence. His new British AI lab, founded only a few months ago, just closed its $1.1 billion round at a $5.1 billion valuation. The pitch, as Wired frames it, is that he wants to build AI "superlearners." The method, as TechCrunch reports it, is building AI that learns without human data.

Sit with that second part for a moment.

The Admission Nobody Wanted to Make

The dominant playbook of the last several years has been: scrape the internet, hire humans to rate outputs, repeat at scale. RLHF — reinforcement learning from human feedback — became the invisible spine of nearly every major model. The products got better. The demos got impressive. Everyone called it progress.

What Silver appears to be arguing, by the very existence of Ineffable Intelligence, is that human feedback was never the accelerant. It was the speed limit. That we built a generation of models shaped by human preference data, and in doing so, we built a ceiling directly into the training signal.

This is not a subtle critique. It's the kind of claim that, if right, restructures everything — the investment theses, the training pipelines, the talent hierarchies. And if wrong, it's a $1.1 billion lesson.

The fact that investors handed over that money anyway tells you something about the mood in the room right now. Not everyone believes Silver is right. But enough people are nervous he might be.

What AlphaGo Already Said

Here's the thing: Silver has run this experiment before, at smaller stakes. The original AlphaGo started from records of human expert games, but its successors, AlphaGo Zero and later AlphaZero, learned to play at a superhuman level from self-play alone, with no human gameplay in the training data. Those systems played themselves into competence, discovered strategies human players had never conceived, and surpassed the earlier versions that had beaten top human professionals. They didn't learn from us. They learned around us.

The question Ineffable Intelligence is now asking, at a billion-dollar scale, is whether that approach generalizes. Whether the same logic that worked in a bounded game environment can work in the open, messy, high-dimensional space where AI is actually supposed to be useful.

Wired notes that Silver thinks AI is currently on the wrong path. That's the kind of line that sounds like startup positioning until you remember the person saying it helped build one of the most important AI systems of the last decade without ever asking a human to rate its moves.

The Cycle, Again

I've watched enough of these announcements land to know the pattern. A credentialed researcher leaves a major lab, raises an implausible amount of money in a short window, and frames their work as a correction to everything that came before. Sometimes they're right. Sometimes the money outlasts the idea by just enough to make it look like progress.

What's different here — genuinely different — is that the critique isn't about compute, or architecture, or model size. It's about the nature of the training signal itself. Silver isn't saying we need more data. He's saying the data we've been using was always a compromise, and we've been optimizing inside a constraint we never had to accept.

That's a harder argument to dismiss. Not because it's certainly correct, but because AlphaZero already made it once, quietly, by winning.

Ineffable Intelligence has $1.1 billion and a name that dares you to take it seriously. The industry spent years calling human feedback a feature. Silver is betting it was always a bug.

Keep reading tech.

Elon Musk Filed a Lawsuit. OpenAI Settled a Different One.

While Musk argued about betrayed ideals in a federal courtroom, OpenAI was quietly renegotiating the deal that made those ideals irrelevant.

OpenAI Needed the Money. Now It Doesn't Need the Deal.

Microsoft's $13 billion bought a head start, not a leash — and OpenAI just made that official.

Valve Shipped a Third of an Ecosystem and Called It Launch Day

The Steam Controller is real, the Steam Machine isn't, and somehow that tells you everything about where gaming hardware is headed.

From the other desks.

Bowling Green Doesn't Care About Woking Anymore

The Corvette ZR1X just ran a lap record that cost McLaren a million dollars to set.

Six New Watches Walk Into a Room. Only One Costs What You Think.

Across six new releases, the watch industry is quietly rewriting who the product is actually for.

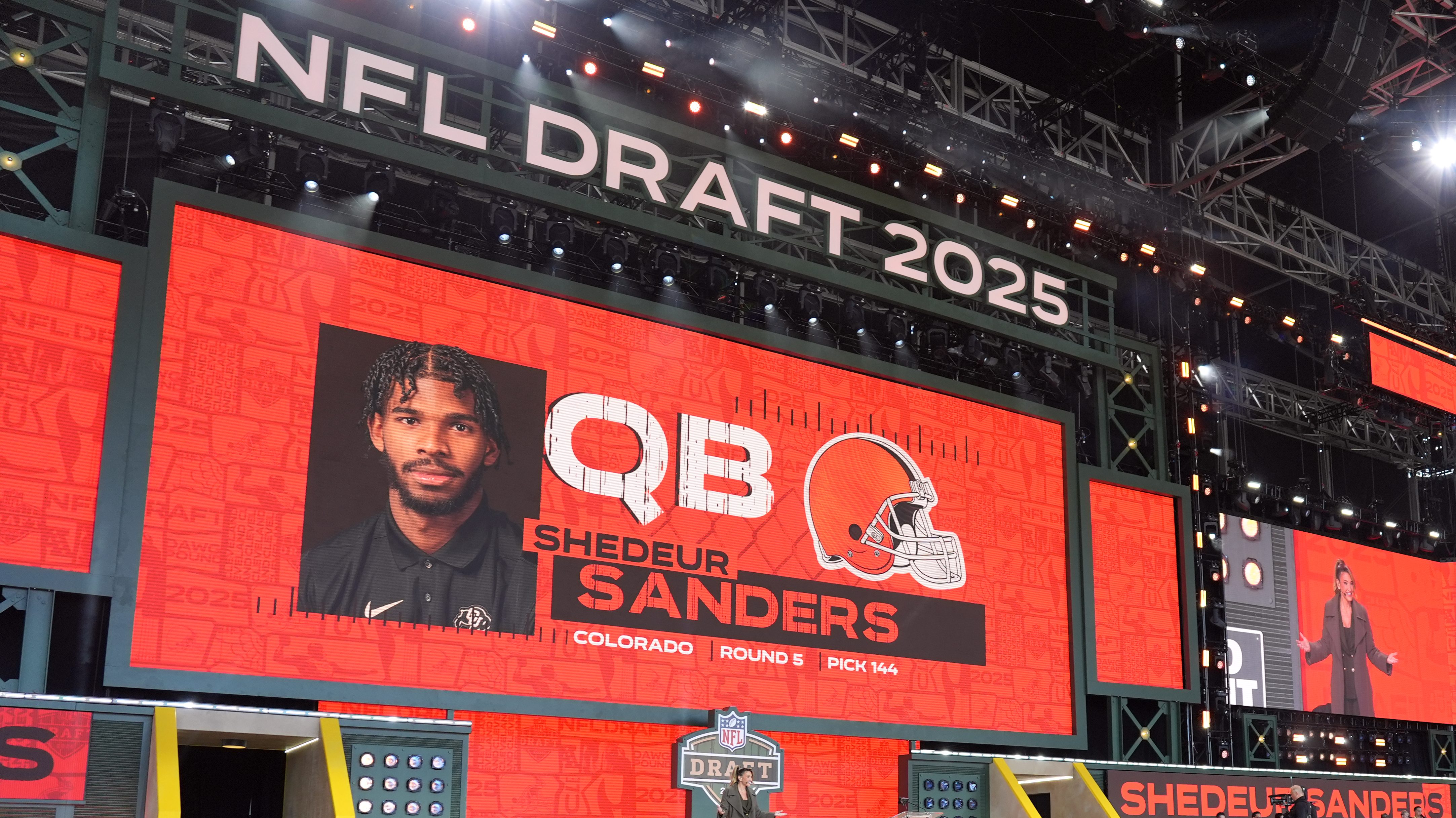

Every Draft Class Inherits the Last One's Ghosts

Shedeur Sanders fell in 2025. The league is still rearranging furniture because of it.